Businesses are rapidly investing in AI to improve automation, decision-making, and operational efficiency, but building a successful ML system involves much more than training a model. The real challenge lies in deploying, managing, monitoring, and continuously improving models in real-world environments. According to Gartner, over 80% of enterprises are expected to use generative AI APIs or deploy AI-enabled applications by 2026. As AI adoption grows, organizations are focusing more on managing the complete machine learning lifecycle efficiently.

A poorly managed ML project can lead to inaccurate predictions, rising costs, deployment failures, and compliance risks. This is why enterprises are prioritizing machine learning lifecycle management to ensure AI systems remain scalable, reliable, and business-focused over time. In this blog, we will explore the machine learning lifecycle stages, major enterprise challenges, optimization strategies, and future trends shaping modern AI systems.

Understanding the Machine Learning Lifecycle

The machine learning lifecycle is the complete process of developing, deploying, managing, and improving machine learning models. Unlike traditional software systems that operate on fixed rules, machine learning systems learn from data and evolve continuously over time.

A structured machine learning development lifecycle gives organizations more reliable deployments, fewer operational problems, and models that stay effective over the long term. A typical lifecycle framework includes discovery and business analysis, data preparation, model development, deployment, long-term performance monitoring, and continuous model optimization.

Many enterprises underestimate the effort required after deployment. In reality, monitoring, retraining, and governance often require more resources than the initial model development itself.

Traditional Software Lifecycle vs Machine Learning Lifecycle

Traditional software applications and machine learning systems function very differently. While traditional software relies on predefined rules and fixed logic, machine learning systems continuously learn from changing data patterns.

| Traditional Software Development | Machine Learning Lifecycle |
|---|---|
| Rule-based systems | Data-driven systems |
| Fixed logic | Continuously evolving models |
| Easier testing | Complex validation requirements |
| Stable outputs | Probabilistic outputs |
| Focus on code quality | Focus on data and model quality |
| Minimal retraining | Continuous retraining required |

This difference explains why AI projects require stronger monitoring, retraining, governance, and lifecycle management practices.

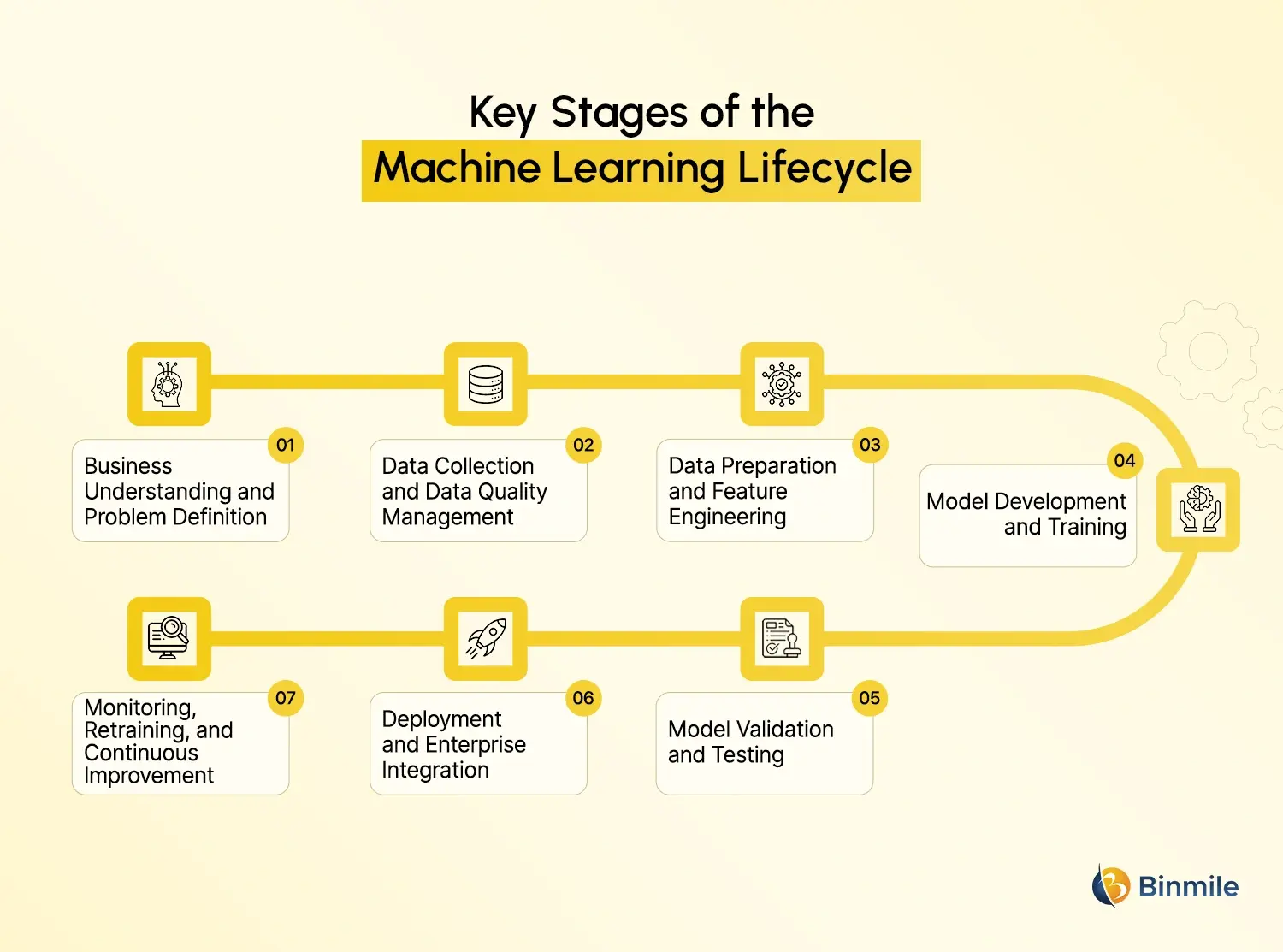

What Are the Key Stages of the Machine Learning Lifecycle?

The machine learning lifecycle consists of multiple interconnected stages that help organizations build, deploy, manage, and continuously improve AI systems efficiently.

- Business Understanding and Problem Definition

Every successful machine learning project starts with a clear understanding of the business situation and its goals. At this stage, organizations decide how they will measure success, evaluate whether machine learning is the right solution, and align their technical efforts with business objectives. A solid foundation here prevents unnecessary challenges later in development.

- Data Collection and Data Quality Management

Both the quality and the quantity of data are essential to the success of an ML system. Since every machine learning system is built on data, accurate and consistent data produces the best achievable predictions. Organizations generally gather data from many sources (enterprise systems, APIs, cloud platforms, and devices), and those sources often produce fragmented datasets that cause operational issues. Strong data governance and validation improve the reliability of the data and, in turn, business outcomes.
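As one illustration of what validation can look like in practice, the following minimal Python sketch checks a batch of records for missing fields, wrong types, and out-of-range values. The schema, the field names (`customer_id`, `age`, `monthly_spend`), and the limits are hypothetical and chosen only for illustration; real projects often rely on a dedicated validation library instead.

```python
from dataclasses import dataclass, field


@dataclass
class ValidationReport:
    """Collects the problems found while checking a batch of records."""
    total: int = 0
    errors: list = field(default_factory=list)

    @property
    def is_clean(self) -> bool:
        return not self.errors


# Hypothetical schema: field name -> (expected type, allowed min, allowed max).
SCHEMA = {
    "customer_id": (str, None, None),
    "age": (int, 0, 120),
    "monthly_spend": (float, 0.0, None),
}


def validate_records(records, schema=SCHEMA):
    """Check each record for missing fields, wrong types, and out-of-range values."""
    report = ValidationReport(total=len(records))
    for index, record in enumerate(records):
        for name, (expected_type, low, high) in schema.items():
            value = record.get(name)
            if value is None:
                report.errors.append((index, name, "missing"))
            elif not isinstance(value, expected_type):
                report.errors.append((index, name, "wrong type"))
            elif low is not None and value < low:
                report.errors.append((index, name, "below minimum"))
            elif high is not None and value > high:
                report.errors.append((index, name, "above maximum"))
    return report
```

A pipeline could call `validate_records` on every incoming batch and stop, or raise an alert, whenever `report.is_clean` is false, so that bad data never reaches training.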

- Data Preparation and Feature Engineering

Before model training starts, raw data needs to be cleaned, standardized, and organized in the correct format. During this phase, teams handle missing values, remove inconsistencies from the collected datasets, and prepare the data for modeling. Feature engineering then transforms raw data into features that models can use, which leads to better (i.e., more accurate) model performance.
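The preparation steps described here can be sketched with nothing but the Python standard library. The example below is only an illustration under simple assumptions: missing values are represented as `None`, median imputation and z-score standardization are picked as common defaults rather than as the recommended method, and `spend_per_visit` is a hypothetical engineered feature.

```python
from statistics import mean, median, pstdev


def impute_median(values):
    """Replace None entries with the median of the observed values."""
    observed = [v for v in values if v is not None]
    fill = median(observed)
    return [fill if v is None else v for v in values]


def standardize(values):
    """Rescale values to zero mean and unit variance (z-scores)."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        # A constant column carries no information; map it to zeros.
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


def spend_per_visit(total_spend, visits):
    """Engineered feature: average spend per visit, 0.0 when there were no visits."""
    return [s / n if n else 0.0 for s, n in zip(total_spend, visits)]
```

In a real project, the imputation value and the scaling statistics would be computed on the training data only and then reused unchanged at prediction time, so that training and serving see the same transformation.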

- Model Development and Training

After the datasets are prepared, the team uses various algorithms and techniques to build and train machine learning models on them. Model selection weighs scalability, explainability, accuracy, and business requirements. Teams also optimize model performance during this phase so that the model’s outcomes are more reliable in actual use.

- Model Validation and Testing

Model validation confirms that a model, once trained, performs accurately and reliably and is free of unwanted bias. Organizations use many evaluation metrics to validate a model, and they also consider other factors such as explainability, fairness, security, and compliance. Good validation processes reduce operational risk and improve trust in AI systems.
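To make the idea of evaluation metrics concrete, here is a small Python sketch for a binary classifier. It assumes labels are encoded as `1` (positive) and `0` (negative), and it uses per-group recall as one simple stand-in for a fairness check; the function names are illustrative and many other metrics and fairness definitions exist.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = positive)."""
    tp = fp = tn = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == 1 and actual == 1:
            tp += 1
        elif predicted == 1 and actual == 0:
            fp += 1
        elif predicted == 0 and actual == 0:
            tn += 1
        else:
            fn += 1
    return tp, fp, tn, fn


def evaluate(y_true, y_pred):
    """Return accuracy, precision, and recall, using 0.0 when a ratio is undefined."""
    tp, fp, tn, fn = confusion_counts(y_true, y_pred)
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


def recall_by_group(y_true, y_pred, groups):
    """Compute recall separately for each group as a basic fairness comparison."""
    results = {}
    for group in set(groups):
        rows = [(t, p) for t, p, g in zip(y_true, y_pred, groups) if g == group]
        truths, preds = zip(*rows)
        results[group] = evaluate(truths, preds)["recall"]
    return results
```

A large gap between groups in `recall_by_group` does not prove a model is unfair, but it is a useful signal that the model deserves a closer look before deployment.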

- Deployment and Enterprise Integration

Deployment is the process of integrating a trained model into a business’s live operations. During this stage, the business needs to ensure that the supporting infrastructure (e.g., servers and networks) is ready and that workflow integration, scalability, and security are addressed. Organizations that adopt MLOps practices can streamline the deployment of AI solutions into their operational processes and improve overall reliability.

- Monitoring, Retraining, and Continuous Improvement

Machine learning systems become less effective over time because business conditions and customer behaviour change. Organizations need to constantly monitor model performance, error margins, data drift, and bias to ensure that their systems remain accurate and reliable. Continuous retraining keeps the model effective as the data it relies on changes, so that it continues to deliver long-term business value.
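One common way to quantify data drift for a single numeric feature is the Population Stability Index (PSI), which compares how a baseline sample and a recent sample are distributed across buckets. The sketch below is a minimal version under stated assumptions: equal-width buckets taken from the baseline range, non-empty samples, and a retraining threshold of 0.25, which is a widely quoted rule of thumb rather than a standard and should be tuned per use case.

```python
import math


def population_stability_index(expected, actual, bins=10, epsilon=1e-6):
    """PSI between a baseline sample and a recent sample of one numeric feature."""
    low, high = min(expected), max(expected)
    # Fall back to a width of 1.0 when the baseline is constant.
    width = (high - low) / bins or 1.0

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so values outside the baseline range land in the edge buckets.
            index = int((v - low) / width)
            counts[min(max(index, 0), bins - 1)] += 1
        # epsilon avoids log(0) and division by zero for empty buckets.
        return [max(c / len(values), epsilon) for c in counts]

    e_shares = bucket_shares(expected)
    a_shares = bucket_shares(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(e_shares, a_shares))


def needs_retraining(psi, threshold=0.25):
    """Flag a feature for review when drift exceeds the (assumed) threshold."""
    return psi > threshold
```

A monitoring job could compute this index for each input feature on a schedule, comparing the training data with the most recent production data, and open a retraining ticket when `needs_retraining` returns true.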

Why Enterprise AI Projects Fail Without Proper Lifecycle Management

Without structured machine learning lifecycle management solutions, organizations often struggle to scale AI initiatives beyond isolated experiments.

- Poor Data Quality

Poor data quality causes a number of different failures in AI projects, including a loss of confidence in the data those projects depend on. If the data used to train an AI system contains errors, its conclusions will be similarly wrong. No amount of code, cleverness, or engineering expertise can overcome poor-quality data.

- Organizational Silos

Machine learning projects require collaboration between data scientists, engineers, DevOps, security teams, and business stakeholders. When teams operate independently of each other, deployment delays, communication gaps, and confusion become common.

- Infrastructure Complexity

As AI is deployed more broadly, managing cloud environments, orchestration pipelines, geographic information systems (GIS), and other supporting infrastructure becomes extremely difficult. Some organizations underestimate how complex the operations needed to sustain an enterprise AI system are.

- Governance and Compliance Challenges

Businesses are under pressure to operate AI systems in a way that is ethical, accountable, and transparent while still helping the organization meet its objectives. As expectations for AI accountability in the workplace continue to rise, ML lifecycle activities must cover the organization’s responsibilities for AI and meet all applicable regulatory requirements.

Machine Learning Lifecycle vs MLOps vs LLMOps

As enterprise AI adoption grows, terms like MLOps and LLMOps are becoming more common. Although they are closely related, each serves a different purpose within AI operations.

| Concept | Primary Focus |
|---|---|
| Machine Learning Lifecycle | End-to-end ML process |
| MLOps | Operational management of ML systems |
| LLMOps | Operational management of large language models |

MLOps combines machine learning with DevOps principles to improve deployment automation, collaboration, reproducibility, and model monitoring. It focuses heavily on CI/CD pipelines, workflow automation, and lifecycle governance.

LLMOps is a more specialized discipline designed for large language models and generative AI systems. It includes prompt management, vector database integration, inference optimization, AI safety controls, and hallucination monitoring.

As generative AI adoption increases, LLMOps is becoming a critical component of enterprise AI strategies.


How to Optimize the Machine Learning Lifecycle

Organizations that successfully scale AI systems focus heavily on lifecycle optimization rather than only model accuracy.

- Automate Repetitive Processes

Automation makes operations more efficient and reduces human error. Businesses increasingly automate data preprocessing, model retraining, deployment workflows, testing, and monitoring alerts, which speeds up the machine learning development lifecycle.

- Strengthen Data Governance

A strong data governance framework provides a foundation for consistency, security, compliance, and observability into how AI is used. This becomes even more critical as AI use grows within the enterprise, because more sensitive employee and customer information is stored and processed.

- Build Reusable ML Pipelines

Reusable pipelines improve scalability and reduce duplicated work, which makes projects easier to execute. Standardized workflows also give organizations a framework for innovating faster across multiple AI initiatives.
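A reusable pipeline can be as simple as an ordered list of named steps that several projects share and extend. The following Python sketch illustrates that idea only; the `Pipeline` class, the step functions, and the `spend` and `visits` fields are hypothetical, and production teams would more likely use an orchestration tool than a hand-written class.

```python
from typing import Callable, Iterable, List, Tuple

Step = Tuple[str, Callable]


class Pipeline:
    """Runs named steps in order, passing each step's output to the next."""

    def __init__(self, steps: Iterable[Step]):
        self.steps: List[Step] = list(steps)

    def run(self, data):
        for _name, step in self.steps:
            data = step(data)
        return data

    def extend(self, *steps: Step) -> "Pipeline":
        """Return a new pipeline that reuses these steps and adds more."""
        return Pipeline(self.steps + list(steps))


def drop_missing(rows):
    """Shared cleaning step: discard rows that contain a missing value."""
    return [row for row in rows if None not in row.values()]


def add_spend_per_visit(rows):
    """Project-specific step: add a derived feature (visits floored at 1)."""
    return [
        {**row, "spend_per_visit": row["spend"] / max(row["visits"], 1)}
        for row in rows
    ]


# One shared base pipeline, extended by a hypothetical churn project.
shared = Pipeline([("drop_missing", drop_missing)])
churn = shared.extend(("spend_per_visit", add_spend_per_visit))
```

Because `extend` returns a new pipeline instead of modifying the shared one, each initiative can add its own steps without affecting the others.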

- Focus on Explainable AI

Businesses and regulators both require transparency in AI decisions. Explainable AI improves trust, lowers the auditing burden, and reduces compliance risk, while helping organizations understand how to build effective and accurate models.

- Measure Business Impact

Organizations should not evaluate the success of their machine learning initiatives on accuracy alone. They should also analyze how AI systems improve operational productivity, revenue growth, and customer satisfaction, reduce fraud, and strengthen overall business performance.

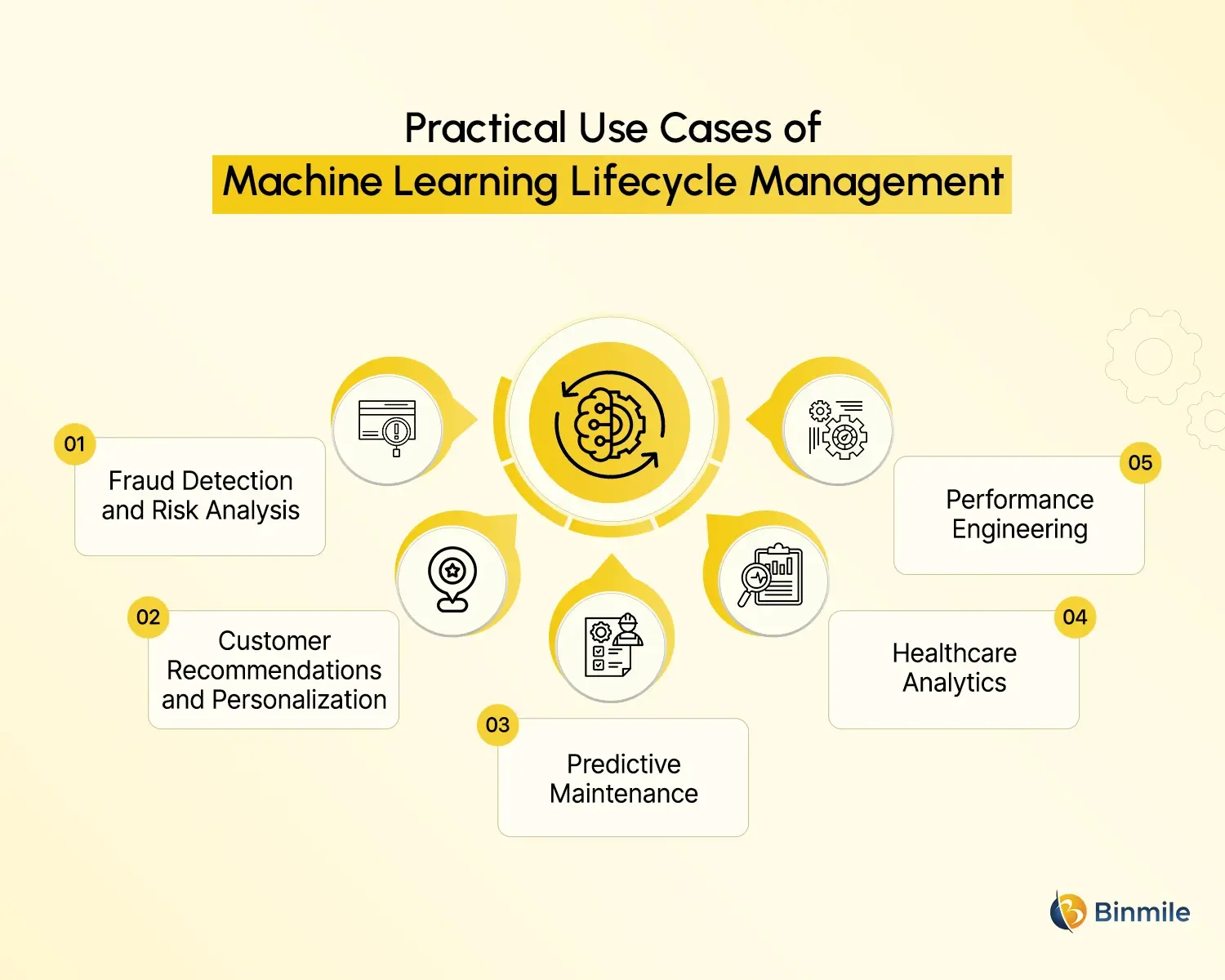

Enterprise Use Cases of Machine Learning Lifecycle Management

Modern use cases of machine learning are driving smarter operations and better business decisions across industries.

- Fraud Detection and Risk Analysis

Banks and payment processors use AI and machine learning to identify and prevent fraudulent transactions, analyze potential credit risk, and strengthen their anti-money laundering operations. Since fraud trends can shift rapidly, these systems must be monitored continuously.

- Customer Recommendations and Personalization

Retailers, both physical and online, use recommendation engines and behavioral analytics to enhance customer engagement and improve conversion rates. These systems are retrained continuously to adapt to customers’ changing preferences.

- Predictive Maintenance

Predictive maintenance uses machine learning to help manufacturers avoid catastrophic equipment failures by predicting when a failure is likely to happen so they can act before it does. The result is less downtime, greater operational integrity and reliability, and lower maintenance costs.

- Healthcare Analytics

Healthcare organizations use machine learning to predict diseases, analyze medical images, and evaluate patient risks. Because healthcare is heavily regulated, these systems must be governed carefully and produce results that are demonstrable and understandable.

- Performance Engineering

Organizations are increasingly adopting machine learning in performance engineering projects to anticipate infrastructure failures, optimize workload distribution, and maintain operational continuity across their digital systems.

Future Trends Shaping the Machine Learning Lifecycle

The machine learning lifecycle is becoming more automated, intelligent, and enterprise-focused.

- AI-Assisted Development

Fast-moving generative AI tools are changing how machine learning systems are developed and managed. AI-assisted development automates parts of coding, debugging, testing, and feature engineering.

- Real-Time Machine Learning

Organizations are moving beyond static historical datasets and building systems that learn continuously from live streaming data. This improves response times and decision-making speed.
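To show what learning from a stream can mean at the smallest scale, the sketch below updates a linear model one observation at a time with stochastic gradient descent, so no historical dataset has to be kept in memory. `OnlineLinearModel` and its method names are hypothetical, and the learning rate is an assumption that would need tuning for real data.

```python
class OnlineLinearModel:
    """Linear regressor that is updated one observation at a time."""

    def __init__(self, n_features, learning_rate=0.01):
        self.weights = [0.0] * n_features
        self.bias = 0.0
        self.learning_rate = learning_rate

    def predict(self, features):
        return self.bias + sum(w * x for w, x in zip(self.weights, features))

    def learn_one(self, features, target):
        """Take one gradient step on the squared error for a single example."""
        error = self.predict(features) - target
        self.bias -= self.learning_rate * error
        self.weights = [
            w - self.learning_rate * error * x
            for w, x in zip(self.weights, features)
        ]
        return error
```

In a streaming setup, each incoming event would first be scored with `predict` and then, once the true outcome is known, passed to `learn_one`, so the model keeps adapting as conditions change.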

- Responsible AI and Governance

As AI regulations take shape, enterprises are investing heavily in fairness monitoring, better explainability systems, governance frameworks, and bias detection to ensure the responsible adoption of AI.

- Edge AI Expansion

Deployment is shifting away from centralized cloud environments that sit far from devices and toward locations closer to the applications themselves. Edge AI reduces latency and meets strict real-time processing needs.

- Autonomous Lifecycle Management

In the future, ML lifecycle management is expected to become more autonomous, with AI systems monitoring, optimizing, and retraining themselves with little or no human guidance.


Building Scalable AI Systems Requires the Right Lifecycle Strategy

Success with enterprise AI requires far more than developing a model. Enterprises also need scalable infrastructure, reliable data pipelines, comprehensive governance frameworks, deployment automation tools, active monitoring systems, and ongoing optimization capabilities to keep their AI projects successful over the long term, with demonstrable ROI.

As organizations continue to scale their AI development across multiple departments, applications, and cloud environments, managing the complete machine learning lifecycle becomes increasingly complicated. Binmile makes it easier for enterprises to manage the entire lifecycle by providing scalable, end-to-end AI engineering solutions, cloud-native development, machine learning lifecycle management, and intelligent automation tailored to the requirements of today’s business environment.

Whether you are delivering predictive analytics, detecting fraud, optimizing business performance, or developing AI-powered applications, a structured lifecycle approach will greatly increase your organization’s operational effectiveness and its potential for long-term AI success.

Frequently Asked Questions

What is the machine learning lifecycle?

The machine learning lifecycle is the complete process of building, deploying, monitoring, and improving machine learning models. It includes data preparation, model training, deployment, monitoring, and continuous optimization for long-term performance.

What is the difference between MLOps and LLMOps?

MLOps focuses on operational management of traditional machine learning systems, while LLMOps is designed specifically for large language models and generative AI applications involving prompt management, inference optimization, and AI safety controls.

How does AI help streamline the machine learning lifecycle?

AI helps automate repetitive tasks such as data preparation, testing, monitoring, feature engineering, and retraining. This improves efficiency, reduces operational delays, and accelerates machine learning development workflows.

Which tools are commonly used to manage the machine learning lifecycle?

Popular tools include TensorFlow, PyTorch, MLflow, Kubeflow, Apache Airflow, Databricks, AWS SageMaker, and Azure Machine Learning for model development, deployment, orchestration, and monitoring.

How long does it take to complete a machine learning project?

The timeline depends on project complexity, infrastructure readiness, data quality, and business goals. Small projects may take weeks, while enterprise-scale ML systems can require several months.

Why is data quality important in machine learning?

Data quality directly impacts model accuracy, fairness, reliability, and business performance. Poor-quality data can lead to biased predictions, operational failures, and compliance risks.